Curriculum-Based Measures in Math

A couple years ago a scientist posted a hilarious TikTok video using this song highlighting our failure to think about measurement in research. I am bringing it back to the surface to highlight how this issue comes into play with our school-based assessment of student performance.

Curriculum-Based Measures

I’m not going to go into depth on the history of curriculum-based measures and their purposes in schools. Please see my previous post for a recap.

There are 3 major areas I want to highlight before discussing the research on math curriculum-based measures.

Lynn Fuchs Framework

The first stage is to identify if the measurement tool can provide psychometrically valid scores at one time point.

Does the measure appear to produce reliable scores?

If administered close together, does the measure appear to produce scores that correlate with other meaningful measures of math achievement?

If administered with a time lag (e.g., a couple of months or many months), does the measure predict student scores on another meaningful measure of math achievement?

Can the measure consistently predict students who are truly at-risk for not meeting end-of-year goals AND consistently predict students who are not at-risk for not meeting end-of-year goals?
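The last question in this first stage is usually summarized with two numbers: sensitivity (the share of truly at-risk students the measure flags) and specificity (the share of not-at-risk students it correctly leaves unflagged). Here is a minimal sketch of that calculation. The function name and the eight-student data set are made up for illustration and do not come from any published study.

```python
def classification_accuracy(flagged, truly_at_risk):
    """Return (sensitivity, specificity) for a screening decision.

    flagged: list of bools, True if the screener flagged the student as at risk.
    truly_at_risk: list of bools, True if the student missed the end-of-year goal.
    Assumes each group (at risk, not at risk) contains at least one student.
    """
    pairs = list(zip(flagged, truly_at_risk))
    true_pos = sum(1 for f, t in pairs if f and t)
    false_neg = sum(1 for f, t in pairs if not f and t)
    true_neg = sum(1 for f, t in pairs if not f and not t)
    false_pos = sum(1 for f, t in pairs if f and not t)
    sensitivity = true_pos / (true_pos + false_neg)
    specificity = true_neg / (true_neg + false_pos)
    return sensitivity, specificity


# Hypothetical screening results for eight students (illustration only).
flagged = [True, True, False, True, False, False, False, True]
truly_at_risk = [True, True, True, False, False, False, False, False]

sens, spec = classification_accuracy(flagged, truly_at_risk)
print(f"sensitivity = {sens:.2f}, specificity = {spec:.2f}")
```

A measure can look strong on one of these numbers and weak on the other, which is why the question asks about both groups of students.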

The second stage is to identify if the measurement tool can provide psychometrically valid scores when considering growth rates on the tool across time.

Does the measurement tool produce reliable scores? This becomes difficult because you must consider the individual student’s performance on a measurement by measurement basis.

Does the measurement tool produce a rate of growth that predicts student scores on another measure of math achievement that is meaningful?

Is the measure sensitive enough to document student learning in short time frames to inform teachers so they can adjust instruction?
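One common way to express a "rate of growth" is the slope of a straight line fit through a student’s scores across time. The sketch below fits an ordinary least squares slope by hand. It assumes the probes were given at equal weekly intervals, and the scores are invented for illustration.

```python
def weekly_growth_rate(scores):
    """Ordinary least squares slope of scores over equally spaced weeks (0, 1, 2, ...)."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two data points to estimate a slope")
    weeks = range(n)
    mean_week = sum(weeks) / n
    mean_score = sum(scores) / n
    numerator = sum((w - mean_week) * (s - mean_score) for w, s in zip(weeks, scores))
    denominator = sum((w - mean_week) ** 2 for w in weeks)
    return numerator / denominator


# Hypothetical correct digits per minute across six weekly probes.
scores = [10, 12, 11, 14, 15, 17]
slope = weekly_growth_rate(scores)
print(f"average gain per week: {slope:.2f}")
```

With only a handful of data points the slope is noisy, which is part of why reliability at this second stage is harder to establish than at a single time point.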

The third stage is to identify if the measurement tool is usable.

Can teachers adhere to the administration and scoring protocol with fidelity?

Do teachers find the administration and scoring protocol feasible within their instructional day?

Alignment

A key principle of curriculum-based measurement is that the items and content actually reflect the instruction that is occurring. I cannot emphasize enough how great the ABCs of CBM book is in highlighting how alignment is more than just content, but also how items are formatted, directions are read, and the topography of student responses that are scored.

Standardization of Measurement

Standardizing the measurement process reduces the impact of confounding variables (e.g., time of day, person administering the items, small group versus large group) that add measurement error and reduce the usefulness of the data for judging the benefits of instruction. Similarly, some are recommending just observing students during math tasks, or perhaps interviewing students about their math thinking, as supplements to a curriculum-based measurement approach. These alternatives can add useful information to inform instruction! But as a stand-alone approach they have too much error. If tasks differ a lot in content or in the topography of how students respond, it is difficult to gauge learning across time. If you have students verbalizing their thinking, then you might be adding construct-irrelevant variance. Meaning, you begin to pick up kids’ ability to verbalize their thinking and not their ability to do math.

Early Numeracy

Reviews have evaluated the psychometrics of curriculum-based measures for early numeracy (Foegen et al., 2007; Nelson et al., 2023). There are several measures used in the early numeracy domain that appear to be useful.

Number identification. In this measure students are presented numerals in a randomized order and are tasked with verbally stating the number that is shown. This is typically administered 1:1 in a timed fashion so data are presented to represent fluent responding (i.e., numbers correctly identified per minute). During administration if a student gets stuck for 3 seconds the teacher will give the correct answer and tell the student to go to the next one.

Oral counting. In this measure students are prompted to start counting from 1 and to count as high as they can go. This is typically administered in a timed fashion so data are presented to represent fluent responding (i.e., numbers correctly counted in sequence per minute). During administration if a student gets stuck for 3 seconds the teacher will give the correct next number and tell the student to count the next one.

A related counting measure is one to one correspondence. The only difference with this measure is students have to touch one dot and say the next counting number.

Missing number. In this measure there is a string of 3 (or 4) numbers in a row but one is missing. For example: 6, __, 8, 9. The student needs to identify “seven” would complete the sequence. This is typically administered 1:1 in a timed fashion so data are presented to represent fluent responding (i.e., missing numbers correctly provided per minute). During administration if a student gets stuck for 3 seconds the teacher will give the correct answer and tell the student to move to the next item.

Quantity discrimination. In this measure there are two numerals side by side. For example,

The student is typically tasked with pointing to the greater number. This is typically administered 1:1 in a timed fashion so data are presented to represent fluent responding (i.e., greater numbers identified per minute). During administration if a student gets stuck for 3 seconds the teacher will give the correct answer and tell the student to move to the next item.

These four measures may be helpful when used in combination for screening-type decisions. For example, teachers can administer all four measures and check whether the student’s score on each is above or below a pre-determined cut score. Suppose a student scored 24 digits correct per minute on number identification in the fall of kindergarten. Using the cut scores provided by Chard et al. (2005), the cut score in the fall of kindergarten for number identification is 14. Thus, the child would be identified as not at risk. Although it is promising to see the potential of these measures for screening-type decisions, missing number and quantity discrimination pose a challenge for progress monitoring. Both have been shown to have really shallow slopes of progress for students in kindergarten and 1st grade. Thus, teachers would need multiple weeks of data before they’d even be able to identify student learning. This is not ideal for judging whether students are responding to instruction or intervention.
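The cut score decision in the example above is just a comparison, sketched below. The only cut score included is the one quoted in the text (14 for number identification in the fall of kindergarten). The dictionary key, the function name, and the choice to treat a score exactly at the cut as not at risk are assumptions for illustration.

```python
# Cut score quoted in the text (Chard et al., 2005):
# number identification, fall of kindergarten.
CUT_SCORES = {"number_identification": 14}


def screen(measure, score, cut_scores=CUT_SCORES):
    """Return 'at risk' when the score falls below the cut score, else 'not at risk'.

    Assumption: a score exactly at the cut counts as not at risk.
    """
    cut = cut_scores[measure]
    return "at risk" if score < cut else "not at risk"


result = screen("number_identification", 24)
print(result)  # not at risk
```

To screen on all of the early numeracy measures, the same function could be called once per measure after adding each measure’s published cut score to the dictionary.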

Elementary

Reviews have evaluated the psychometrics of curriculum-based measures in elementary grades (Christ et al., 2008; Foegen et al., 2007; Nelson et al., 2023). There are three predominant types of measures used in the elementary grades that appear to be useful.

Basic facts. These come in a variety of flavors. For example:

Sums to 10

Sums to 18

Subtraction with max minuend of 10

Subtraction with max minuend of 18

Multiplication with max factor of 9

Multiplication with max factor of 12

Division with max dividend of 81

Division with max dividend of 144

Another issue to consider about the type of measurement tool used is whether it is a single-skill or multi-skill measure. For example, I could have a measure that ONLY includes items that are sums to 18. This would be a single-skill measure targeting these basic facts. Conversely, I could have a measure that includes items reflecting sums to 18 AND subtraction with a max minuend of 18. This would be a multi-skill measure because both addition and subtraction facts are included.

Typically, these measures are administered classwide (or in a group setting if it is part of intervention) in a timed fashion so data are presented to represent fluent responding (i.e., correct digits per minute). You may notice that the fluency data are not problems correct per minute but rather digits. The logic around this is all about enhancing the sensitivity of the scores being collected. For example, 4 + 5 = 9 would be scored as 1 correct digit whereas 4 + 8 = 12 would be scored as 2 correct digits.
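To make the digit-based scoring concrete, here is a minimal sketch. It assumes a simple rule in which each digit of the student’s answer is compared with the correct answer by place value, starting from the ones place. Published scoring protocols may handle details such as work shown or placeholder zeros differently, and the function names are hypothetical.

```python
def correct_digits(student_answer, correct_answer):
    """Count digits in the student's answer that match the correct answer by place value."""
    student = str(student_answer)[::-1]  # reverse so index 0 is the ones place
    correct = str(correct_answer)[::-1]
    return sum(1 for s, c in zip(student, correct) if s == c)


def digits_correct_per_minute(responses, minutes):
    """responses: list of (student_answer, correct_answer) pairs from one timed probe."""
    total = sum(correct_digits(s, c) for s, c in responses)
    return total / minutes


print(correct_digits(9, 9))    # 4 + 5 = 9 earns 1 correct digit
print(correct_digits(12, 12))  # 4 + 8 = 12 earns 2 correct digits
print(correct_digits(13, 12))  # tens digit right, ones digit wrong: 1 correct digit

# Hypothetical 2 min probe with three answered items.
rate = digits_correct_per_minute([(9, 9), (12, 12), (13, 12)], minutes=2)
print(rate)  # 2.0
```

The third item shows where the extra sensitivity comes from: an answer of 13 for 4 + 8 would earn nothing under problems correct, but earns partial credit under digits correct.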

Generally, the single-skill measures will be more sensitive to change on a week to week basis (and honestly gains can be found on a session to session basis) because the set size of items is restricted. Thus, a 2 min administration will provide useful information on student learning. If a multi-skill measure is used, the set size is increased. Thus, increasing the length of time for administration (e.g., 4 min) will enhance the utility of the score to identify learning gains that occur.

I have two parting thoughts on basic fact measures.

First, the potential utility of cloze measures of basic facts intrigues me despite the limited research at this point in time (see Jiban & Deno, 2007 for one study). In a cloze problem the unknown quantity can be any of the numbers that make up the math fact. For example, if we focus on the fact 8 x 6 = 48, there are 3 potential items: (1) 8 x 6 = ___ (this is the item that would be included in a basic fact measure), (2) __ x 6 = 48, or (3) 8 x __ = 48.

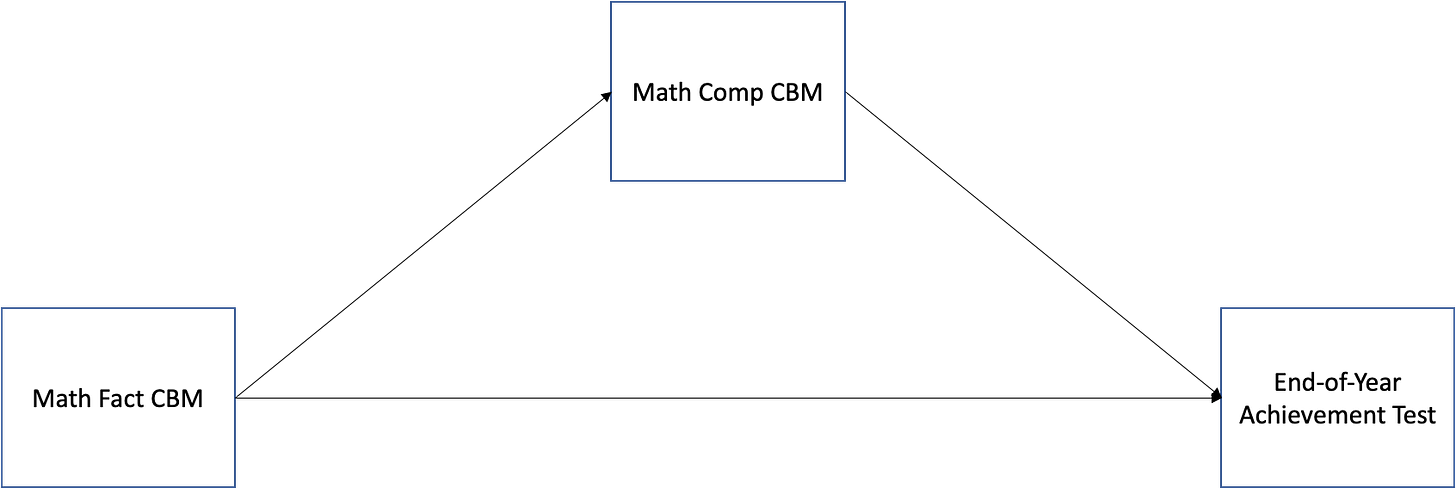
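The three cloze versions of a fact can be generated mechanically, which is handy when building probe sheets. This is only a sketch: the function name and the choice to support just multiplication and addition are assumptions for illustration.

```python
def cloze_items(a, b, symbol="x"):
    """Return the three cloze versions of the fact a (symbol) b = result."""
    operations = {"x": lambda p, q: p * q, "+": lambda p, q: p + q}
    result = operations[symbol](a, b)
    blank = "__"
    return [
        f"{a} {symbol} {b} = {blank}",       # standard basic fact item
        f"{blank} {symbol} {b} = {result}",  # first number unknown
        f"{a} {symbol} {blank} = {result}",  # second number unknown
    ]


items = cloze_items(8, 6)
for item in items:
    print(item)
```

For 8 x 6 this prints the same three items listed above.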

Second, in some research with upper elementary students, researchers will build a model that includes multiple CBM measures to see what best predicts the end-of-year math achievement test. For example, they might include a concepts and applications CBM (see below), math fact CBM (see above), and math computation CBM (see below) and see how much of the variance in math achievement scores each predicts. They will then say something like, math fact CBM predicted the least amount of variance so its utility is not as beneficial for older students. I have some concerns with this line of reasoning. My concern lies in the way the statistical model is tested.

Testing the mediated relation, such as the model above, would likely be a more accurate way to evaluate what is occurring. Math fact knowledge will be predictive of the end-of-year achievement test. It clearly will not explain ALL of the variance because end-of-year achievement tests aim to measure content covering the scope and breadth of the state standards. However, its actual predictive value, and with it teachers’ interpretations of how important it is to progress monitor and track gains on basic facts, gets eaten up by the math comp CBM measures. Thus, my recommendation for teachers is to ensure they use both a basic fact CBM (until they have determined students have mastered all facts) AND the math comp CBM, rather than just using a math comp CBM.

Computation. These come in a variety of flavors as well!

Multi-digit addition (2 digit x 2 digit)

Multi-digit addition requiring regrouping (2x2)

Multi-digit addition (3 digit x 3 digit)

Multi-digit addition requiring regrouping (3x3)

Multi-digit subtraction (2 digit x 2 digit)

Multi-digit subtraction requiring regrouping (2x2)

Multi-digit subtraction (3 digit x 3 digit)

Multi-digit subtraction requiring regrouping (3x3)

Multiplication multi-digit

Division multi-digit

Similar to basic facts, it is important to think about the type of measurement tool you aim to use given your goals: single-skill or multi-skill. The single-skill measure will be more sensitive to changes on a week to week basis (or session to session), but will not function as well as a stand-alone screening measure because it covers a very narrow set of items compared to the scope of possible items for that grade level. Conversely, the multi-skill measure will be better for screening if the computational items included on the measure reflect the potential content for that grade span. However, it might not be as sensitive in picking up student learning on a week to week basis.

Similar to basic fact CBMs, these measures are administered classwide (or in a group setting if it is part of intervention) in a timed fashion so data are presented to represent fluent responding (i.e., correct digits per minute). Once again, items are typically scored as digits correct per minute rather than problems correct per minute.

Concepts and applications. The last type of measure used frequently is the concepts and applications CBM. These are typically constructed by randomly pulling items that reflect the standards students are expected to learn during that grade. The actual construction of each probe sheet will be balanced, meaning standards that are a larger focus for that grade level will have more items than standards that are less of a focus.
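The balanced random draw described above can be sketched in a few lines. Everything here is hypothetical: the standard names, the item labels, and the blueprint (how many items each standard gets) are placeholders, and real probe sets are built from much larger item banks.

```python
import random


def build_probe(item_bank, blueprint, seed=None):
    """Randomly draw items for one probe.

    item_bank: dict mapping a standard to its list of candidate items.
    blueprint: dict mapping a standard to how many items it gets on the probe.
    """
    rng = random.Random(seed)
    probe = []
    for standard, count in blueprint.items():
        # sample() draws without replacement, so no item repeats on a probe
        probe.extend(rng.sample(item_bank[standard], count))
    rng.shuffle(probe)  # mix standards so items do not appear in blocks
    return probe


# Hypothetical item bank: fractions is the larger focus, so it gets more items.
item_bank = {
    "fractions": ["F1", "F2", "F3", "F4", "F5"],
    "measurement": ["M1", "M2", "M3"],
}
blueprint = {"fractions": 3, "measurement": 2}

probe = build_probe(item_bank, blueprint, seed=1)
print(probe)
```

Changing the seed produces an alternate form with the same balance across standards, which is the property that lets scores be compared from one probe to the next.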

Typically, these measures are administered classwide (or in a group setting if it is part of intervention) in a timed fashion so data are presented to represent fluent responding (i.e., correct problems per minute). Because the breadth of content included on these measures is wide, administration timing is oftentimes longer (i.e., 6 to 9 min) compared to the time limit for computation or basic fact CBMs.

Concepts and applications CBMs oftentimes have the strongest utility as screeners because the measurement tool is designed to reflect ALL of the content students are expected to learn that school year. The drawback is that concepts and applications CBMs can be challenging to use for progress monitoring. On a week to week basis it may be difficult to identify student learning because the probes include items that reflect the entire year’s content.

Parting Thoughts

There is less research on CBM tools for secondary math content. I will write a follow up post to this one on potential measurement tools that may be used.

Computer adaptive testing has become all the rage. It sounds so shiny and exciting: the computer will present different items to students based on correct or incorrect responding in order to identify their exact knowledge. I have four major concerns related to the use of computer adaptive tests.

First, in practice they can take a lot of time. I have witnessed many schools use computer adaptive testing for screening-based decisions, and it will take students between 30 and 45 min to cap out on the test and obtain a score. For some teachers, this can take 1 to 2 entire class periods to finish up screening. If this is completed three times a year, that is anywhere between 3 and 6 class periods of instruction lost to screening - not good.

Second, the measurement tools are smart and will flag students who might be fast clickers - they just click anything to get through the test. But if school personnel are not savvy to this, then they are using invalid data to gauge a student’s need for supplemental instruction. Even if school personnel are savvy, it then would require even MORE lost instructional time for that student to retake the screening measure and put forth their best effort. The same problems can occur with the CBM measures I mentioned above, but we are talking about 2 to 6 min of time lost rather than 30 to 45 min.

Third, score interpretation is not straightforward. Most of the computer adaptive measures will produce a RIT score. A teacher will ask me, “what the hell does RIT = 145 mean?” Remember good ol’ Art Dowdy - I prefer things that are easy to interpret. Stating that a student solved 34 correct digits per minute on a math CBM seems more interpretable, and therefore more actionable, when deciding how to enhance that child’s learning.

Fourth, the actionable steps to take are not always straightforward. The computer adaptive tests will often give score breakdowns based on content domains. For example, it might say something like the student scored strong in Operations and Algebraic Thinking but lower in Measurement and Data. Yet, the number of items the student actually answered that fit within each of these domains is oftentimes very limited - thus interpreting these subdomain scores to guide instruction is not a tenable decision.

If you want to see a conversation with two authors of an amazing article on CBMs you can watch it here.

Hi Corey, Have you gotten a chance to look into measurement tools for secondary math content yet? I currently teach Algebra 2 and am just now learning about CBMs for a grad school course. I would be curious to learn more about CBMs at the secondary level.