Let me state my conflicts of interest and biases up front. Readers need to know where I am coming from to understand the lens through which I am writing.

I went through a general education, teacher preparation program in elementary education

I taught as a general education teacher (5th grade) in an elementary school

I am a colleague of (i.e., have a working relationship with) 4 of the 6 authors of the Witzel et al. (2023) paper

I currently work in a special education faculty position at an institute of higher education

I have previously been a member of both the National Council of Teachers of Mathematics and the Council for Exceptional Children

I was a reviewer on the Witzel et al. (2023) paper

Research Methods

In my opinion, something that is not discussed enough is how locked in researchers get to specific research designs. Let’s do a quick tutorial on research designs, grouped under some umbrella terms. I am going to couch the definitions specifically in education and then provide example research questions that all address a similar phenomenon.

Descriptive Research. This aims to describe the variables that are occurring within an educational context. This could include survey data, direct observation, or case study research.

What types of activities and instructional approaches are teachers using related to word problem solving instruction?

What activities do teachers report they use for word problem solving instruction?

What strategies do students use to solve word problems as they engage in schema-based instruction?

Relational Research. This aims to describe the relations between variables. This could include correlational or other (e.g., structural equation modeling) research approaches aimed at identifying relations between variables.

How do cognitive factors and domain specific skills predict word problem solving performance of students at-risk or identified with a math disability?

Qualitative research. There are a host of methodologies within qualitative research. A similar theme of this umbrella domain is to provide rich descriptions about a phenomenon of interest.

What do teachers report as barriers to supporting students’ word problem solving performance?

What are students’ perspectives on using the new strategy to solve word problems?

Experimental research. This examines a functional relation between an independent variable and a dependent variable. Worded another way, does the manipulation of an instructional practice lead to changes in math learning, while scientific principles are used to control for confounding threats to this claim?

Does schema-based instruction improve the word problem solving performance of elementary aged students receiving supplemental math instruction in a resource room classroom?

The 4 umbrella research domains I provide above are integral to understanding a phenomenon. I hope my sample research questions under each domain highlight that! If we want to truly understand the phenomenon of students engaging in word problem solving, all of those research questions are integral (and more questions could be asked that I did not include)! Yet researchers often focus too heavily on one type of question. I am guilty of this! I built knowledge and expertise in single-case designs (#ScrdChat) and systematic reviews. So what do I do? A lot of those! Yet this expertise limits my ability to ask different research questions because it shapes (a) what research questions I ask and (b) what I prioritize.

Tan et al. Review

The Tan et al. review was conducted by scholars who self-identified as “Disability Studies in Education” scholars. The goal was to evaluate whether studies aligned with or departed from “humanizing mathematics education.”

The authors limited their search to articles published from 2006 through 2016. This is concerning to me for two reasons. First, the rationale for starting at 2006 is not well supported. Why would earlier articles not be able to contribute to the knowledge base? Second, the authors truncated the search at 2016 (hello, y’all, it is 2023?). We are missing 6-7 years of publications. I know systematic searches can lead to delayed publication, but this is the longest time span I have ever seen.

Why does this matter? Literature that is relevant to the conversation is excluded because of systematic decisions made by the author team without strong justification. They cite prior reviews that “covered” the earlier literature, but those reviews may have had different inclusion criteria, coding guides, and dispositions toward the literature base, so excluding studies on that rationale is concerning.

The authors included the following:

For this current review, we intend to avoid, to the extent possible, conventional divisive boundaries in education (e.g., general education vs. special education), but instead engage educators, researchers, policy-makers, and activists broadly, and in particular those who have an interest in humanizing mathematics education for and with disabled students. In our search and, subsequently, in the analysis, we did not delineate articles that fit into a particular field of study, such as mathematics education or special education. Thus, what we consider as mathematical educational research encompasses contributions from multiple fields of study. Moreover, our search for articles was not intended to be exhaustive but to capture an adequate sample of studies for analysis and address our research questions.

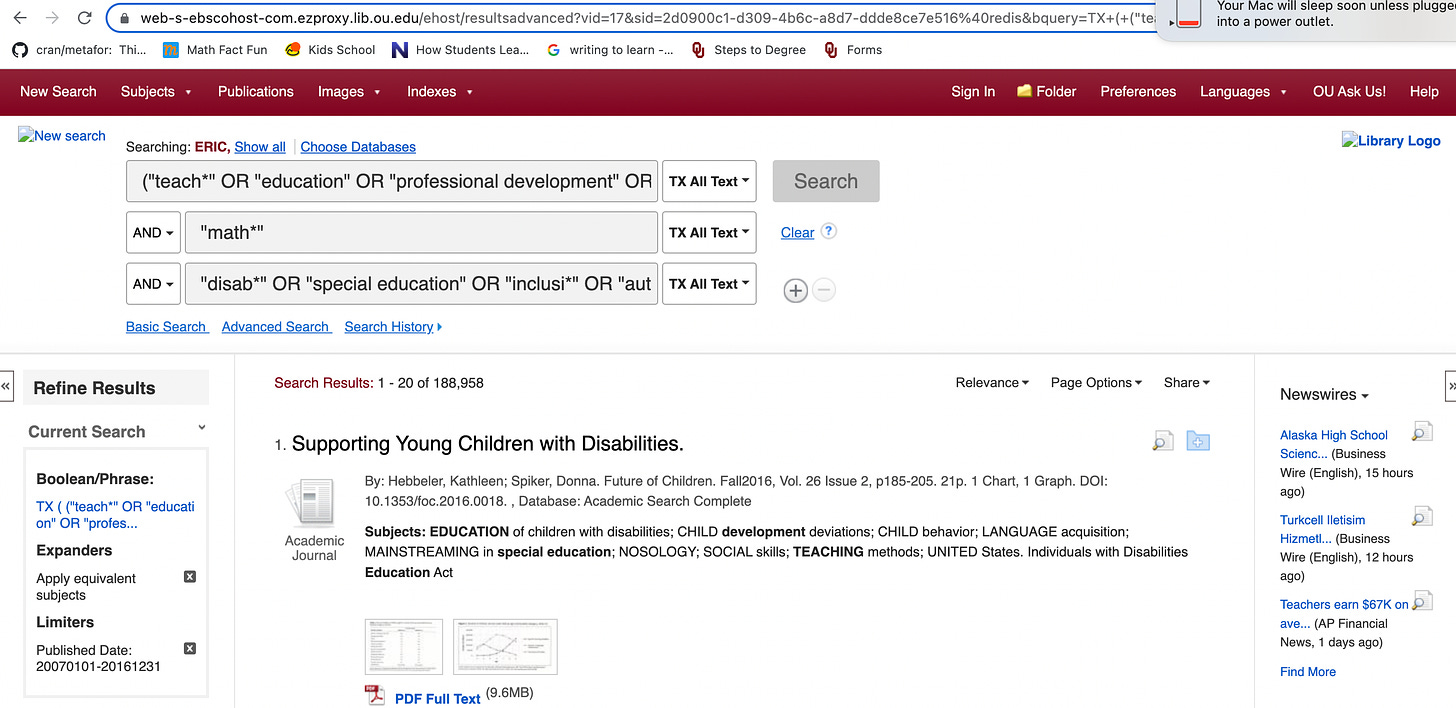

Then they provided some key terms. I attempted to rerun the same search through EBSCO using the keywords they provided along with the date limiters. I ended up with 188,958 results. I did not search PsycINFO because I would have had to use ProQuest. Still, this exercise highlights that the authors failed to provide the actual Boolean string they used to find the articles they aimed to include. It raises questions about how they drew conclusions from the literature if I cannot even find what they included through a search (see below).
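To make the point about Boolean strings concrete, here is a minimal sketch of how a search could be recorded so that someone else can rerun it. The concept groups are hypothetical placeholders, not the terms Tan et al. used, and the function name `build_boolean_query` is my own. The idea is simply that synonyms are joined with OR inside a concept, concepts are joined with AND, and the full string is reported together with the database and date limits.

```python
def build_boolean_query(concept_groups):
    """Join synonyms with OR inside each concept and join concepts with AND."""
    clauses = []
    for terms in concept_groups:
        # Quote multi-word phrases so they are searched as exact phrases.
        quoted = [f'"{term}"' if " " in term else term for term in terms]
        clauses.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(clauses)


# Hypothetical placeholder terms, NOT the terms used by Tan et al.
concept_groups = [
    ["math*", "numeracy"],
    ["disab*", "special education"],
    ["teacher*", "educator*"],
]

query = build_boolean_query(concept_groups)

# Report everything a reader needs to rerun the search.
search_record = {"database": "EBSCO", "years": (2006, 2016), "query": query}
print(search_record)
```

Reporting the exact string, the database, and the limiters together is what lets a reader check whether the included studies actually turn up.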

The authors state, “Thus, the unit of analysis was primarily the effects of the interventions on student outcomes (e.g., scores on assessments) while excluding any educator constructs in the analysis of the intervention (e.g., teacher growth, knowledge, or decision-making)” (pp. 879-880). Yet the authors also state that they “excluded all studies that only measured math knowledge acquisition” (p. 879). It is impossible to know how many studies were excluded under this criterion because the authors do not report this information in the flow diagram (see p. 879). I am confused about how the mission can be listed as student learning when the authors excluded studies that measured student learning.

The authors stated, “We added two additional studies (Eriksson, 2008a, 2008b) through a hand search of the Journal of Mathematical Behavior, a prominent journal in mathematics education that did not turn up in our original search but that we knew from experience had published disability-related articles.” It is common to hand search journals that have published articles included in the initial review. Yet the authors appear to have searched only one journal (?), without a clear rationale, and failed to search additional journals. I can think of a plethora of journals that publish “disability-related articles” and “math.” For example, here are some worth considering:

Journal of Special Education

Remedial and Special Education

Learning Disabilities Research & Practice

Learning Disability Quarterly

Exceptional Children

Exceptionality

Journal of Learning Disabilities

Behavioral Disorders

Journal of Emotional and Behavioral Disorders

Focus on Autism and Other Developmental Disabilities

Rural Special Education Quarterly

Learning Disabilities: A Contemporary Journal

Preventing School Failure: Alternative Education for Children and Youth

Journal of Applied School Psychology

Insights on Learning Disabilities: From Prevailing Theories to Validated Practices

Journal of Special Education Technology

Journal of Behavioral Education

Research in Developmental Disabilities

Education and Treatment of Children

Journal of School Psychology

Psychology in the Schools

School Psychology Review

Um…I hope the list above highlights how crummy their journal hand search was.

Another issue. In most systematic reviews it is common to do a first author search, that is, searching all publications by the first author of each included study to see if they fit the inclusion criteria. This makes sense because most authors pursue a systematic line of research. Second, it is common to do an ancestral search. This means looking through the reference list of every included study to see if other studies cited there would meet the inclusion criteria for the review. This makes sense because a primary study is likely to cite similar, related studies. Last, a forward search is typically run. This means searching all studies that have cited a primary study included in the review. This makes sense because the primary study might be cited by another similar study that would fit the inclusion criteria for the current review. The authors did none of these things.
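The ancestral and forward searches described above can be sketched on a toy citation map. Everything here is hypothetical: the study labels and the `cites` dictionary are made up for illustration, and in practice the candidates would still need to be screened against the inclusion criteria.

```python
# Hypothetical toy data: each key is a study, each value is its reference list.
cites = {
    "A": ["C", "D"],
    "B": ["D"],
    "E": ["A"],
    "F": ["B", "C"],
}
included = {"A", "B"}  # studies already included in the review


def ancestral_search(included, cites):
    """Studies that appear in the reference lists of included studies."""
    found = set()
    for study in included:
        found.update(cites.get(study, []))
    return found - included


def forward_search(included, cites):
    """Studies that cite at least one included study."""
    found = {study for study, refs in cites.items() if included & set(refs)}
    return found - included


# Candidates to screen against the inclusion criteria.
candidates = ancestral_search(included, cites) | forward_search(included, cites)
print(sorted(candidates))
```

In this toy example the two searches surface four additional candidates that the original keyword search never touched, which is exactly why skipping them is a concern.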

Why does the search matter? Well, if the sample of studies included in your review is systematically unrepresentative of the TRUE available literature on the topic, all your conclusions are biased, because there are systematic differences between what you included and what is ACTUALLY available that meets your inclusion criteria. Furthermore, in the Tan review the authors arbitrarily “excluded all studies that only measured math knowledge acquisition.” This is weird given their focus, and I have many questions about this decision. Many single-case designs I have read testing math interventions include social validity data from students and teachers on the “goals,” “procedures,” and “outcomes” of the study. I am unsure whether single-case designs would be included or excluded if they reported these data as part of the experiment.

The authors developed a coding guide a priori (cool!) with reference to prior theory and research (cool!). The authors made some adjustments to their coding guide as they walked through coding, with justifications (cool!). The authors provided ZERO data on whether independent coders agreed on the codes assigned to articles (not cool!). Why does this matter? Well, independent researchers given the operational definitions of the terms, with examples and non-examples, should agree on whether an article belongs in a category such as “humanizing” or “dehumanizing.” This threshold was not reached. Thus, the authors are asking us to trust their consensus evaluation, given their self-identified positionality statement on who they are as researchers.
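For readers unfamiliar with what agreement data look like, here is a minimal sketch using Cohen's kappa, one common index of agreement between two independent coders. It is my choice for illustration, not a statistic the Tan et al. paper reports, and the codes below are hypothetical. Kappa is computed as (observed agreement − chance agreement) / (1 − chance agreement).

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who each coded the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must code the same non-empty set of items.")
    n = len(rater_a)
    # Proportion of items on which the two raters assigned the same code.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal code frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[code] * counts_b[code] for code in counts_a) / (n * n)
    if expected == 1:
        raise ValueError("Kappa is undefined when both raters use one identical code.")
    return (observed - expected) / (1 - expected)


# Hypothetical codes for 10 articles: H = "humanizing", D = "dehumanizing".
rater_a = ["H", "H", "D", "D", "H", "D", "H", "D", "D", "H"]
rater_b = ["H", "D", "D", "D", "H", "D", "H", "H", "D", "H"]

kappa = cohens_kappa(rater_a, rater_b)
print(round(kappa, 2))
```

In this made-up example the coders agree on 8 of 10 articles, but because half of that agreement would be expected by chance, kappa comes out around 0.6. Reporting something like this is what lets readers judge whether the categories can be applied consistently.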

This paragraph is chock full of content

Although the focus of studies in the dehumanizing waves topology is on educators, disabled students are not positioned as mathematics doers and thinkers. Disability as a deficit remains the central focus of these studies. For the most part, these studies aim to indirectly respond to deficits through “fixing” children, using a limited range of intervention approaches. These approaches are typically educator centered, with utilization of explicit and/or direct instructional strategies that do not position students as mathematical thinkers. Gersten and colleagues (2009) describe explicit instruction as follows: first, the educator models the “step-by-step plan (strategy) for solving the problem” that is specific for a set of problems, followed by asking students “to use the same procedure/steps demonstrated by the teacher to solve the problem” (p. 1210). We characterize such strategies as medicalized, ableist, and acultural, whereas students are not perceived as mathematics doers and thinkers and cultural beings capable of constructing and reasoning with mathematics concepts. Systemically, these strategies reflect the notion that “oppression makes it necessary to control how oppressed people think” (Camangian & Cariaga, 2021, p. 2). Studies in this topology focused on the targeted professional learning of direct instruction approach or endorsed such an approach.

Note, the authors include the following caveat to the statement above as a footnote on p. 899: “Direct instruction is not in and of itself ableist in all contexts; however, when only one pedagogical approach is applied to all disabled students regardless of their understanding and/or the mathematics at hand, we see this as ableist. Nondisabled students are not given the same limitations on how they can learn mathematics.”

I have a couple of questions. Is teaching a child how to convert an exponent to repeated multiplication to solve the problem ableist? Is teaching a child how to count on from a number (e.g., 6) ableist? Is teaching a child how to solve a multi-digit addition problem through the standard algorithm ableist? Is teaching a child how to find the perimeter and area of a rectangular prism ableist? Is teaching a child how to write and read a decimal to the hundredths place value ableist? Is teaching a child how to read and represent a fraction in an area model, length model, and set model ableist? Is looking up how to fold in egg whites on YouTube ableist? Is looking up how to change a tire on YouTube ableist? Is looking up how to fill out a W-4 for work ableist? Is pursuing driver education to learn to drive a car ableist? I’m unsure how teaching someone a skill through modeling, followed by feedback on independent performance of the skill, is an ableist approach to learning.

My overall take. Consider the authors’ initial aims (p. 879):

Articles had to (a) be published in English or included a translated English version in peer reviewed journals; (b) focus on educators of mathematics, such as prospective and practicing K–12 teachers, teacher educators, researchers, and mathematics as central units of analysis; (c) include issues of disability as a focal topic (e.g., disabled students, special education); and (d) be original empirical studies. Thus, we excluded review or synthesis of research, conceptual and theoretical articles, opinion pieces, and examples of and reports on practices or programs.

First, the authors excluded a bunch of research that “should” have fit their inclusion criteria, but for whatever reason they opted to exclude it. This limits what we can conclude about what is known because the set of included articles is systematically unrepresentative of what should have been included. Second, the search procedure was impossible to replicate (I tried). Third, the coding procedure does not build any substantial evidence that an independent team would reach the same coding conclusions as the author team (they did not even provide evidence that members of the author team could reach agreement independently).

Where does this leave us?

I encourage readers to see a commentary on the Tan et al. study here. Here are my thoughts.

I am discouraged that an article was published in a premier journal focused on systematic reviews in education that had such limitations to its methodology. This hurts science

I am discouraged that in many circles we seem to be talking past one another

I am encouraged that all people claim to be focused on enhancing students’ math learning outcomes

I am hopeful we can discuss what key outcomes we want students to achieve related to math. If we can agree upon this, it can then dictate research and practice that will steer us in a similar direction

I am hopeful that my field of special education will critically evaluate ALL types of research to further understand phenomena related to math difficulty and how best to support student learning

I am hopeful that “math education” (I use this term broadly) will be open to communicating about the knowledge generated through intervention research in special education to bolster Tier 1 (general education) instruction for all students in mathematics